ScarGuard: Vibecoding a Heron Deterrent That Actually Works

About a year ago, a great blue heron ate more than ten of my goldfish. I watched it happen - well, I watched the aftermath. One morning I walked out to the pond and the population had just... changed. Great blue herons are patient, methodical hunters. A single bird can empty a pond in a morning.

A number of fish survived, including some of our favorites. One in particular left a mark: her name is Kroger. We'd adopted her in 2024 from my daughter's music teacher, who could no longer keep her in their aquarium. Before that, Kroger had been won at a fair. She'd already been rescued once.

In 2025 we moved her into a new 4,500-gallon pond - a huge upgrade from the roughly 300-gallon pond she'd been in. Then the heron came. One morning I found Kroger stranded on a rock at the pond's edge, barely in the water, beaten to hell. I poked her thinking she was dead. She flopped off into the depths. A few days later she surfaced again - slowly, covered in fuzz, but alive. The damage was so bad that it took us several weeks to realize Kroger hadn't died.

She's still alive in 2026. Getting chased by the boys. We call her Scar now too, for obvious reasons.

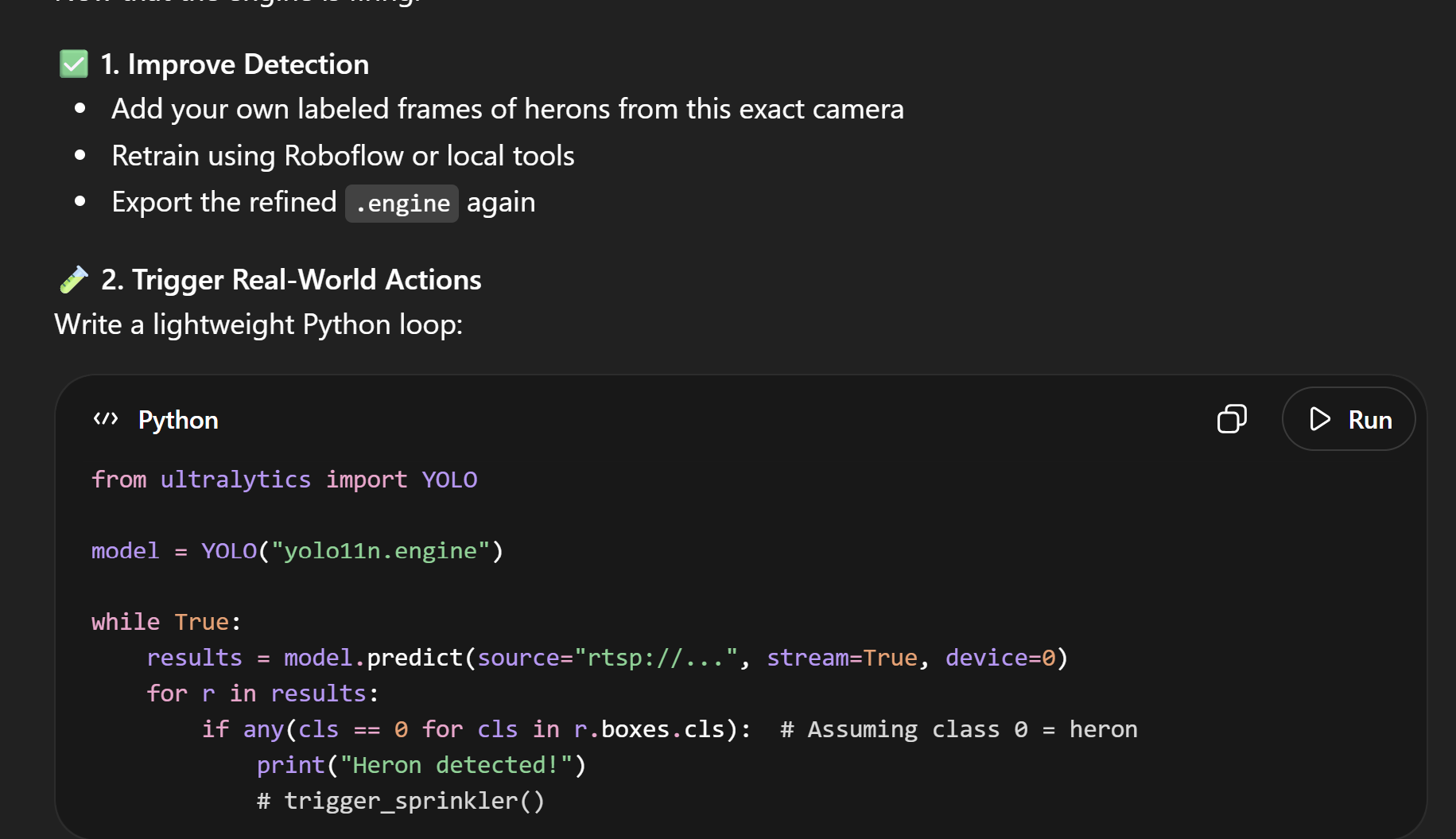

My immediate reaction was the obvious one: I can build something to stop this. I have a homelab. I have cameras already pointed at the pond. Some initial searches on Reddit and Koiphen didn't lead to much - I found several dead projects, but I figured this was surely solvable with a computer vision pipeline and some determination. So I started wrestling with ChatGPT, trying to piece together something that could watch the RTSP stream, detect a heron, and trigger a sprinkler.

I gave up. Early 2025-era AI tooling was good enough to hand you Python snippets and describe what you needed, but not quite good enough to walk you through integrating hardware, writing a real application, and getting the whole thing actually running when something went wrong. Every dead end felt like it needed three more dead ends to get past. I punted it.

The return

I picked it back up a few weeks ago with better tools, and it went differently. What I have running now is called ScarGuard - v0.13.1, named after the fish - and it's doing the thing I originally wanted it to do.

The detection engine is YOLO running on an NVIDIA Jetson Orin Nano. If you're not familiar with the Jetson line, it's NVIDIA's edge AI hardware - a small board with a real GPU designed for running inference locally. The Orin Nano is the entry-level version, which is still more compute than I strictly need for watching a pond, but it runs cool, draws modest power, and the ML ecosystem around it is solid. The system pulls a live RTSP feed from my UniFi cameras, samples frames, runs YOLO inference on the GPU, and when it sees a target above a configurable confidence threshold - something happens.

One thing worth being upfront about: the system isn't running a custom-trained heron model. It's running the stock COCO bird class from YOLOv8. I built a full Roboflow training pipeline into the project - dataset labeling, training, evaluation, model promotion - but I haven't actually needed to use it yet. The stock model is good enough. A generic "bird" detector running on consumer hardware catches herons reliably enough that it's triggering real deterrents and protecting real fish. That's probably the most honest advertisement for how far YOLO on a Jetson gets you out of the box. The custom model is on the roadmap when I decide I need better precision.
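To make the "stock bird class plus a confidence threshold" idea concrete, here's a minimal sketch of the filtering step. It is not the code from the repo: the `Detection` dataclass, the `bird_hits` helper, and the 0.5 threshold are hypothetical names and values picked for illustration. The one fixed fact is that `bird` is class index 14 in the 80-class COCO label set that stock YOLOv8 weights use.

```python
from dataclasses import dataclass

# In the 80-class COCO label set used by stock YOLOv8 weights, "bird" is index 14.
COCO_BIRD_CLASS_ID = 14


@dataclass
class Detection:
    """One detected object (hypothetical structure, not ScarGuard's own type)."""
    class_id: int
    confidence: float
    box: tuple  # (x1, y1, x2, y2) in pixels


def bird_hits(detections, min_confidence=0.5):
    """Keep only bird detections at or above the confidence threshold."""
    return [
        d for d in detections
        if d.class_id == COCO_BIRD_CLASS_ID and d.confidence >= min_confidence
    ]


# Made-up detections for a single frame.
frame_detections = [
    Detection(class_id=14, confidence=0.82, box=(410, 220, 560, 480)),  # strong bird hit
    Detection(class_id=14, confidence=0.31, box=(30, 40, 60, 70)),      # too weak, dropped
    Detection(class_id=0, confidence=0.95, box=(700, 100, 900, 600)),   # person, dropped
]
hits = bird_hits(frame_detections, min_confidence=0.5)
print(len(hits), "bird detection(s) above threshold")
```

Anything that survives this filter is what would go on to trigger notifications and deterrents.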

What "something happens" means

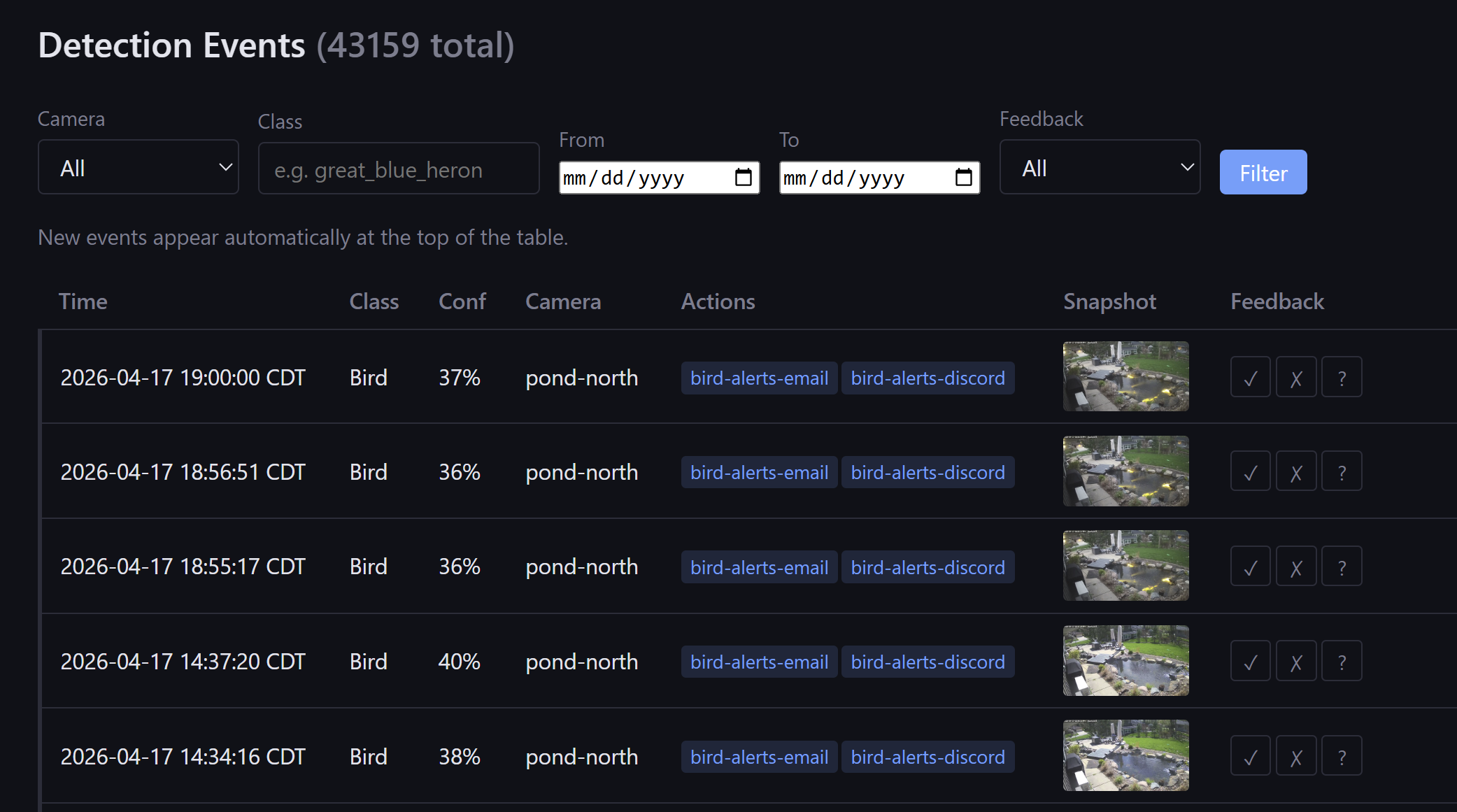

ScarGuard runs as seven Docker Compose services (redis, caddy, detector, web, notifier, deterrent, log-streamer). If you're familiar with Frigate, it's the closest analog - but Frigate is a general-purpose NVR with object detection built in, and it stops at notifications. ScarGuard is purpose-built for the full loop: detect, notify, and physically deter. I'm not aware of anything else that packages YOLO inference, Jetson GPU acceleration, and Tuya-based physical deterrence as a single deployable stack. When a heron is detected it can:

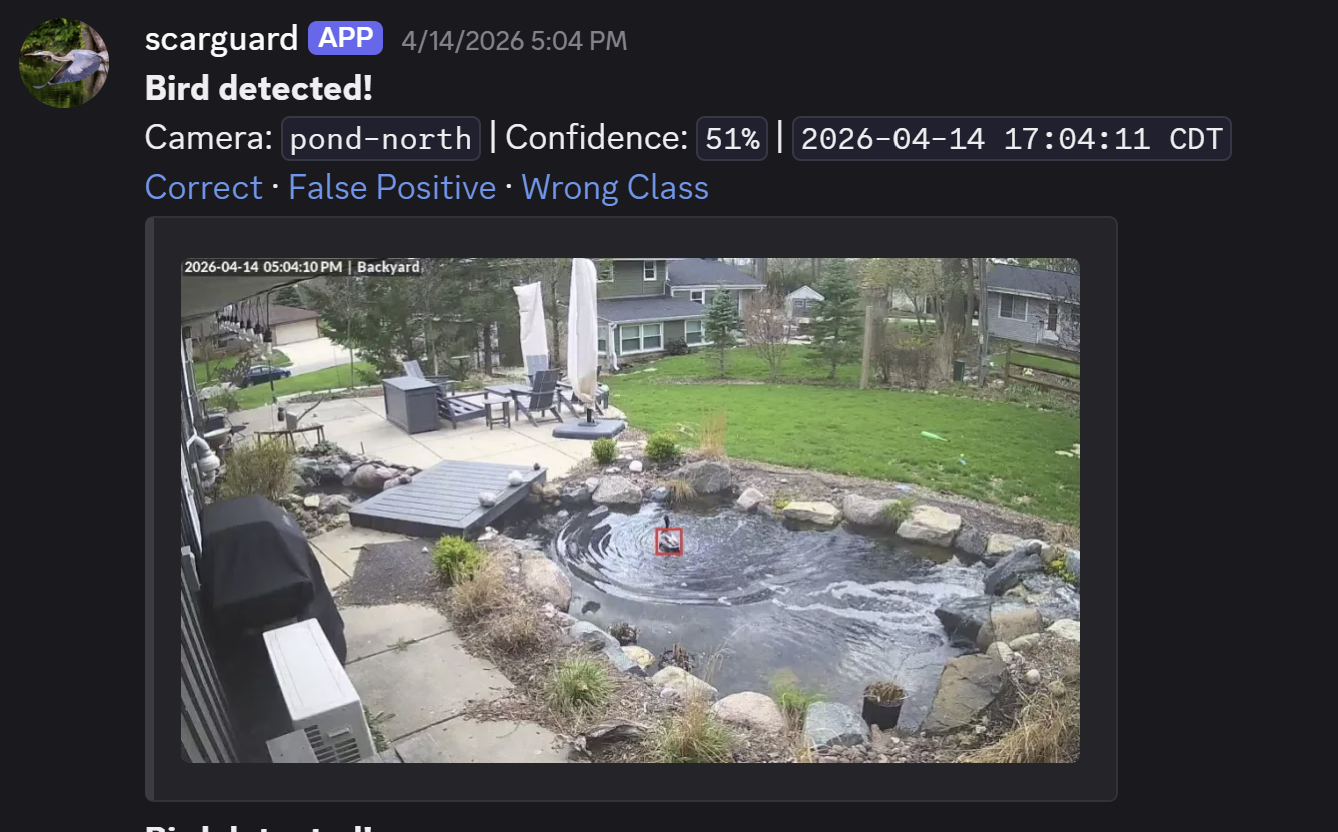

- Send a Discord notification with the detection frame attached

- Send an HTML email alert

- Push a notification via ntfy (self-hosted or ntfy.sh)

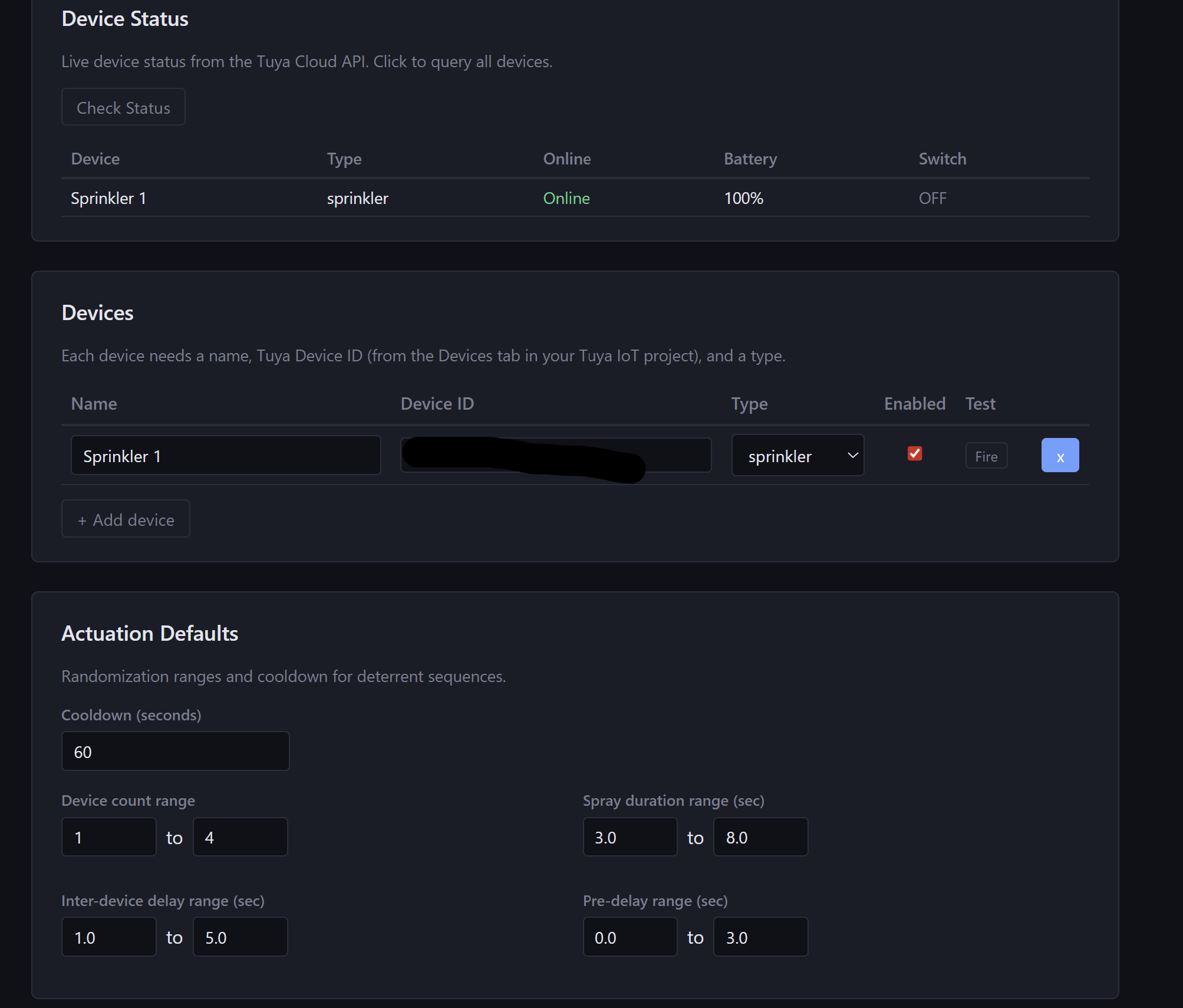

- Trigger physical deterrents via the Tuya Cloud API - sprinkler valves, lights, sirens, smart plugs
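Of the notification channels above, ntfy is the simplest to illustrate, because publishing is just an HTTP POST of the message body to `<server>/<topic>`, with optional `Title` and `Priority` headers. The sketch below only builds the request and doesn't send it. The `build_ntfy_request` helper and the topic name are hypothetical, and this isn't necessarily how ScarGuard's notifier service does it.

```python
import urllib.request


def build_ntfy_request(topic, message, title="ScarGuard", server="https://ntfy.sh"):
    """Build (but do not send) an ntfy publish request. Hypothetical helper."""
    return urllib.request.Request(
        url=f"{server}/{topic}",
        data=message.encode("utf-8"),          # the message is the raw POST body
        headers={"Title": title, "Priority": "high"},
        method="POST",
    )


# "pond-alerts-example" is a made-up topic name.
req = build_ntfy_request("pond-alerts-example", "Bird detected at the pond (confidence 0.82)")
print(req.get_method(), req.full_url)

# To actually publish, you would send it:
# urllib.request.urlopen(req, timeout=10)
```

For a self-hosted instance, the only change is the `server` argument.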

The Tuya integration is the part I'm most pleased with. Tuya is the cloud platform underneath a huge chunk of consumer smart home hardware - if you've got a smart plug that works with the Smart Life app, there's a good chance it's Tuya underneath.

The key design detail: deterrent firing is randomized in timing. Traditional deterrents - plastic owls, reflective tape, even fixed sprinklers - lose effectiveness quickly as wildlife habituates to them. What works is unpredictability. A response that varies, triggered only when a bird is actually there.
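A minimal sketch of what randomized timing can look like, assuming the variation is a random delay before firing plus a random burst length. The `plan_deterrent` function and the ranges in it are hypothetical, not ScarGuard's actual parameters.

```python
import random


def plan_deterrent(rng, min_delay=0.5, max_delay=4.0, min_burst=3.0, max_burst=10.0):
    """Pick a random delay before firing and a random burst length, in seconds."""
    delay = rng.uniform(min_delay, max_delay)
    burst = rng.uniform(min_burst, max_burst)
    return delay, burst


rng = random.Random()  # use random.Random(some_seed) for repeatable tests
delay, burst = plan_deterrent(rng)
print(f"wait {delay:.1f}s, then run the valve for {burst:.1f}s")
```

The point is that no two responses look the same to the bird, while the trigger itself still only happens on a real detection.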

There's also a full web UI - event log with snapshot overlays, live feed, a config editor that hot-reloads without a container restart, system stats (CPU, GPU, RAM, per-camera FPS), and a deterrent actuation log with per-device test-fire buttons. Per-camera exclusion zones have been in since v0.4, which handles the obvious false-positive problem of having a plastic heron decoy sitting at the pond's edge.
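The exclusion-zone idea can be sketched in a few lines, assuming rectangular zones and a simple "is the center of the bounding box inside the zone" test. The function names and coordinates here are hypothetical; the real implementation may use polygons or a different overlap rule.

```python
def box_center(box):
    """Center point of an (x1, y1, x2, y2) bounding box."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)


def in_zone(point, zone):
    """True if the point lies inside a rectangular (x1, y1, x2, y2) zone."""
    x, y = point
    zx1, zy1, zx2, zy2 = zone
    return zx1 <= x <= zx2 and zy1 <= y <= zy2


def outside_exclusion_zones(boxes, zones):
    """Drop any box whose center falls inside an exclusion zone."""
    return [b for b in boxes if not any(in_zone(box_center(b), z) for z in zones)]


decoy_zone = (0, 300, 200, 600)       # made-up rectangle around the plastic decoy
boxes = [
    (50, 350, 150, 550),              # center (100, 450): inside the decoy zone
    (410, 220, 560, 480),             # center (485, 350): a real visitor
]
kept = outside_exclusion_zones(boxes, [decoy_zone])
print(kept)
```

With a zone drawn around the decoy, it stops producing events while a bird anywhere else in the frame still does.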

The debugging interlude

One of the more satisfying moments in this project's history was the v0.12.7 inference performance investigation. Somewhere around v0.12.1 I'd fixed a non-root container issue, and the fix introduced an os.path.exists() call inside the inference loop - a one-liner that turned into an O(N) directory scan on every single frame. Inference time degraded noticeably, but the cause wasn't obvious. I spent a while chasing the wrong theories before running py-spy, which identified the culprit in about 30 seconds. One-line fix, recovered ~50ms per call, back to ~33 FPS on the Orin GPU.
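The general shape of that kind of bug, and of the fix, looks something like the sketch below. This is not the actual ScarGuard code: the function names and the marker file are hypothetical, and it assumes the path's existence doesn't need to be re-checked while the loop is running.

```python
import os
import tempfile


def run_loop_slow(frames, marker_path):
    """Anti-pattern: touches the filesystem once per frame."""
    processed = 0
    for _ in frames:
        if os.path.exists(marker_path):  # filesystem call on every iteration
            processed += 1
    return processed


def run_loop_fast(frames, marker_path):
    """Same result here, with the check hoisted out of the hot loop."""
    marker_present = os.path.exists(marker_path)  # one filesystem call total
    processed = 0
    for _ in frames:
        if marker_present:
            processed += 1
    return processed


with tempfile.NamedTemporaryFile() as marker:
    frames = range(1000)
    slow = run_loop_slow(frames, marker.name)
    fast = run_loop_fast(frames, marker.name)

print(slow, fast)
```

Both versions return the same count; the difference only shows up in a profiler, which is why a sampling tool like py-spy is a good first move when a hot loop slows down for no obvious reason.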

That kind of story is why I wrote up a full INFERENCE_INVESTIGATION.md post-mortem in the repo. The problem wasn't complicated once I looked in the right place. It just took a while to figure out where to look.

Vibecoded, which means what exactly

I want to be honest about what this is. I didn't start this project knowing how to train a YOLO model or interface with the Tuya Cloud API. Large portions (all) of ScarGuard were written with AI tools - Claude Code, mostly - with me doing the direction, the debugging, and the "why is this still not working" investigation.

This is what "vibecoded" means to me: I had a clear problem, a clear outcome in mind, and enough technical context to keep the AI going in the right direction and recognize when it was going sideways. The code is real code that does real things. I'm not going to pretend I wrote every line from first principles, but I also know exactly what every part of it does, because I debugged all of it.

I'm aware "vibecoded" has become a bit of a pejorative lately - shorthand for slapping together something that technically runs but has no auth, no tests, no error handling, and will fall over the first time a real user touches it. I get why that reputation exists. But it doesn't have to be that way. ScarGuard has CodeQL scanning in CI, Redis authentication, non-root containers, thread-safe camera handling, and a proper audit log. I vibecoded it and it also has those things. The approach and the rigor aren't mutually exclusive - you just have to actually care about the second part.

What changed between the first attempt and this one was the tooling. Not my skill level, really - the AI got substantially better at holding context across a multi-component project, reasoning through problems that span multiple files, and catching its own mistakes. The gap between "ChatGPT hands you a Python snippet" and "Claude Code helps you build and debug a full application" turned out to be the difference between a project I abandoned and one that's running.

The project philosophy I wrote into the CLAUDE.md: "No over-engineering: this is a pond guardian, not a distributed platform. Keep it simple." That constraint has been useful. Every time I've been tempted to add something clever, I've gone back to that and punted it.

What's left

For my needs, it's pretty feature complete. The remaining roadmap is short:

- Hook up actual sprinklers. The Tuya integration works - I've tested it with a Smart Life sprinkler valve. The next step is hooking up four sprinklers in a way that blends in.

- Train a custom model. The stock COCO bird class has been surprisingly effective, but a model trained specifically on herons (and the other usual suspects - raccoons, ducks) would mean better precision and fewer false positives. The training pipeline is already in the repo; I just need to accumulate enough labeled detection frames to make it worthwhile.

- Tweak and tune. Confidence thresholds, deterrent timing, exclusion zones, camera angles - the kind of iterative refinement that only comes from running it through more seasons.

The codebase has ambitions beyond what I strictly need - species-based response profiles, an ESP32 companion board for local actuation - but honestly, getting the sprinklers firing and the model trained is the finish line for "this does what I built it to do."

Kroger's vindication

The system has caught herons - just not with a sprinkler yet. The first real detection hit my phone as a Discord notification: great blue heron, bounding box, confidence score, snapshot attached. The sprinkler valve wasn't hooked up yet. So I called my neighbor, and his 12-year-old charged into my yard and scared it off.

The detection-to-notification pipeline worked exactly as designed. The physical deterrent just happened to be a kid.

Kroger is still swimming. So is the rest of the current population, which has grown considerably since the dark times. A fish that was won at a fair, rescued from an aquarium, moved into a real pond, mauled by a heron, and left for dead - still going.

The code is on GitHub at sentania-labs/scarguard if you want to dig into it.